On teaching evaluations

At the end of each term, university students rate their teachers. The stated goal of these evaluations is to measure teaching quality. The idea is that accurate feedback would encourage teachers to improve their craft. Do teaching evaluations achieve this goal, or are they merely an inefficient or potentially even harmful activity?

In trying to answer this question, we need to address three sub-questions. If the response to any of the three sub-questions is no, the validity of using teaching evaluations to measure teaching quality is compromised.

The three sub-questions:

1. Is teaching quality measurable?

2. Do existing questionnaires measure teaching quality?

3. Can average students judge teaching quality?

Is teaching quality measurable?

Imagine we put 30 physics professors in a room, show them the recordings of a course on physics, and ask them to rate the lecturer. There will surely be differences in ratings, but I suspect the average response will correlate quite well with teaching quality. I expect this to be the case not just in physics but in most other fields as well.

Do existing questionnaires measure teaching quality?

The magnitude of an earthquake can be measured with a seismometer. Good luck trying to do it with a ruler or a sound level meter. Similarly, it could be the case that teaching quality is measurable, but student evaluations (as currently implemented) aren’t the right tool for the job. To judge if they are, we can take two approaches. The first approach is to analyze the list of questions in a typical teaching evaluation form.

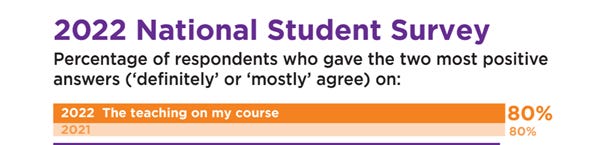

Consider the UK. Every year, all university students are invited to complete the National Student Survey, one section of which is about teaching quality. Below are the questions in this section, taken from the 2022 survey. (The full list of questions is available here in PDF.)

If I were to draft questions to measure teaching quality, I would exclude some from the existing list (especially question 4), I would include some others, and I would modify the wording of some of the existing ones. If I were to publish my updated list, others might in turn reasonably disagree with my selection of questions. Rather than going down this inherently subjective and contentious path, I suggest taking a different approach.

The second approach involves applying a smell test to the results. We expect teaching quality data to have certain characteristics. When we don’t find these characteristics in the data, it’s probably because we have data on something other than teaching quality. It’s not important what that ‘something’ is, as we only care about the fact that it’s not teaching quality.

One of the characteristics we expect of the data is variability. This survey is based on almost 400 institutions, covering the whole higher education scene in the UK. Some institutions surely have much better teaching than others. If we observe low variability in the data, we should be suspicious because low variability goes against our prior belief that teaching quality varies widely.

Let’s first look at how students rated teaching quality overall, then zoom in on one of the constituent questions of teaching quality.

Students rated teaching as uniformly good, leaving us with an apparent contradiction between the survey data and our prior belief. There are two ways out of this contradiction: either our prior belief is wrong, or these questions don’t measure teaching quality. If you believe the first, you may as well advise your friends to apply to practically any unknown UK university for its supposedly excellent teaching. I’m afraid your friends might be in for a costly disappointment if they decide to follow your advice.

Can average students judge teaching quality?

Some people (e.g., physics professors, top students) can judge teaching quality, but I suspect average students can’t. Let me give two reasons why.

The Doctor Fox Lecture

The first reason is that students might mistake unimportant cues of teaching for important ones. An experiment from 1973 illustrates this point well. In the experiment, conducted with an experienced group of educators, the authors were interested in how an audience evaluates teachers. They picked a lecturer, Dr. Myron L. Fox, an authority on the application of mathematics to human behavior, and asked him to give a lecture on his topic ‘Mathematical game theory as applied to physician education’.

After the one-hour lecture and a half-hour subsequent discussion, the audience evaluated him on various dimensions. Overall, they were happy with his performance, with most of them claiming that Dr. Fox stimulated their thinking and presented his material in an interesting way.

One might reasonably argue that students giving positive ratings to a lecturer doesn't necessarily prove that they are poor at evaluating teaching ability. After all, couldn't it be possible that Dr. Fox was a genuinely exceptional teacher? In a typical situation, it could easily be the case. But this was far from a typical situation, and Dr. Fox was not a typical lecturer. He was, in fact, a professional actor hired to deliver a lecture. Furthermore, he was coached to make as little sense as possible, by

present[ing] his topic and conduct[ing] his question and answer period with an excessive use of double talk, neologisms, non sequiturs, and contradictory statements. All this was to be interspersed with parenthetical humor and meaningless references to unrelated topics.

The takeaway from this experiment is that people often place undue weight on certain easily observable but non-predictive cues, especially when they evaluate something they aren’t familiar with.

Great on one level, bad on another

The second reason average students may be bad at identifying good teaching is that it’s difficult to know what’s important in a field until one becomes knowledgeable in that field. Imagine a course on WWII. The lecturer can explain exquisitely the Battle of Kursk or the military capabilities of the combatants. It would constitute bad teaching, however, to focus disproportionately on these two topics at the expense of other, potentially more central themes. Average students fail to recognize bad teaching because they don’t know what other topics the lecturer should have prioritized instead.

Conclusion

My takeaway is that student evaluations (as currently implemented) don’t measure teaching quality. Moreover, whether average students could judge teaching quality even with an appropriately designed questionnaire is questionable.

One possibility is that these evaluations pose an administrative and logistical burden but create no other harm. A more pessimistic view, which I will explore in a future post, is that they not only waste time and resources but can be harmful by introducing perverse incentives for both institutions and teachers.