9 > 221 and why study methodology matters

A study by a well-known psychologist found that people think 9 is bigger than 221. At first glance, this looks like the definition of a surreal study with an impossible conclusion. The result would make for a great headline. It pleases those who like complaining about the sorry state of research. It also pleases those who take great joy in pointing out others’ apparent stupidity and innumeracy.

Unfortunately for those prone to schadenfreude, this study shows neither that research is bad, nor that people are innumerate. Instead, it shows something much more mundane: study design matters. To drive this point home, the author of this study came up with a clever idea. He deliberately chose a misleading study design. By showing that the study produces an impossible conclusion, he drew attention to the importance of getting the study design right.

When someone hears that people considered 9 bigger than 221, they imagine the following experiment: People were shown the numbers 9 and 221 side by side and were asked to pick the larger one. This is an eminently sensible guess. But this is not what happened in this study. The study asked one set of people to rate the bigness of the number 9. It asked a different set of people to rate the bigness of the number 221. It then compared the two ratings, concluding that the average rating was higher for 9 than for 221.

So, any given person rated only one of the two numbers, not both. This type of study design, where two groups of people experience different things, can be the ideal way to study many important questions, including the effectiveness of drugs and policies. But whereas drug trials typically track objective outcomes, rating the bigness of a number is a subjective enterprise. Big is a relative term, and two groups may use very different reference points to judge bigness. This can then lead to impossible conclusions like 9 being bigger than 221.

As the paper's author explains, the numbers 9 and 221 invoke different contexts. The number 9 makes people think of single-digit numbers. They then say that 9 is big because it’s indeed big for a single-digit number. In contrast, the number 221 makes people think of three-digit numbers. They then say 221 is small because it’s indeed small for a three-digit number. So, paradoxically, the objectively bigger number is rated as smaller. This erroneous conclusion would never arise if people compared the two numbers directly.
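To make the mechanism concrete, here is a minimal simulation sketch. It is not the study's data or method. It assumes a hypothetical rater who places a number on a 1-to-10 scale according to where it falls within the range it brings to mind (1 to 9 for the number 9, 100 to 999 for the number 221), plus some random noise. The function names, the scale, and the noise level are all illustrative assumptions.

```python
import random


def rate_bigness(number, low, high, rng, noise=0.5):
    """Hypothetical rater: score `number` on a 1-10 scale relative to
    the reference range [low, high] that the number brings to mind."""
    position = (number - low) / (high - low)  # 0.0 = bottom of range, 1.0 = top
    rating = 1 + 9 * position + rng.gauss(0, noise)
    return min(10.0, max(1.0, rating))  # keep the rating on the scale


def pick_larger(a, b):
    """Direct comparison: a person who sees both numbers picks the larger one."""
    return a if a > b else b


def simulate(n_per_group=1000, seed=0):
    rng = random.Random(seed)

    # Between-groups design: each person rates only ONE number,
    # using the reference range that number evokes (an assumption).
    ratings_9 = [rate_bigness(9, 1, 9, rng) for _ in range(n_per_group)]
    ratings_221 = [rate_bigness(221, 100, 999, rng) for _ in range(n_per_group)]
    mean_9 = sum(ratings_9) / n_per_group
    mean_221 = sum(ratings_221) / n_per_group

    # Direct-comparison design: each person sees BOTH numbers.
    picks = [pick_larger(9, 221) for _ in range(n_per_group)]
    share_picking_221 = picks.count(221) / n_per_group

    return mean_9, mean_221, share_picking_221


if __name__ == "__main__":
    mean_9, mean_221, share = simulate()
    print(f"Average bigness rating of 9:   {mean_9:.2f}")
    print(f"Average bigness rating of 221: {mean_221:.2f}")
    print(f"Share picking 221 in a direct comparison: {share:.0%}")
```

Under these assumptions, the group that rates 9 lands near the top of the scale and the group that rates 221 lands near the bottom, even though everyone who sees both numbers picks 221. The reversal comes entirely from the differing reference ranges, not from anyone misjudging the numbers.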

Wrong conclusions based on a faulty study design are not confined to artificially created contexts, cooked up by creative psychologists. They permeate daily life. One prominent example involves cross-country comparisons of subjective measures, such as happiness, satisfaction, confidence, or trust.

Let’s consider a concrete example. Imagine we wanted to know how much confidence people in Myanmar and Finland have in their country’s civil service. I chose these two countries, as they are as different as two countries can be. Moreover, the Finnish civil service outperforms its Burmese counterpart on virtually all objective measures. So, in this context, Finland is to Myanmar what 221 is to 9.

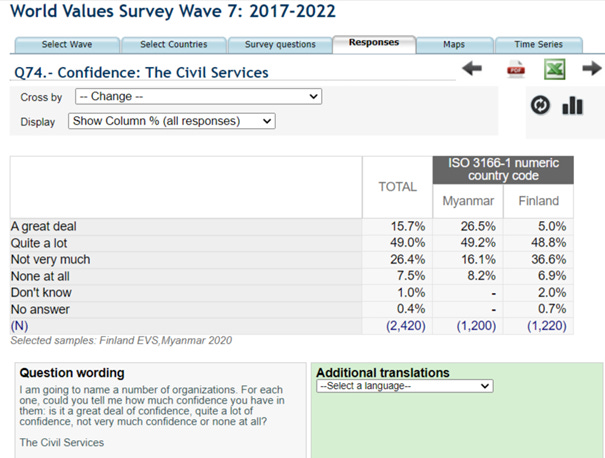

Now, let’s look at people’s subjective evaluations, as reported in the World Values Survey.

The percentage of people who have “a great deal” or “quite a lot” of confidence in the country’s civil service is 75.7% in Myanmar and 53.8% in Finland. Before calling for the resignation of the head of the Finnish civil service, we should pause. How is it possible that the objectively better Finnish system is rated worse?

In short, it’s possible because ratings are subjective, and the Burmese and the Finns rate their country’s civil service against different standards. A poorly performing Burmese civil service could seem acceptable or even desirable to the Burmese if their expectations are very low. Conversely, a well-functioning Finnish system could be seen negatively by Finns for occasionally falling short of their very high expectations. So, comparing the two percentages is misleading because they measure different things.

For a more valid cross-country comparison, we would need to find people who experienced both civil services and ask them which country’s system they have more confidence in. Alternatively, we could try to ensure that people in both countries use the same reference point for their ratings (a very difficult task) or base the comparison on objective factors that don’t suffer from reference point issues. These alternatives won’t always be possible. Luckily, refraining from dubious comparisons is a viable option in these cases, and one we should perhaps exercise more frequently.